GSA SER Verified Lists

The Foundation of Automated Link Building

Every search engine optimization professional using GSA Search Engine Ranker eventually encounters the same bottleneck. The tool’s raw power depends entirely on the quality of its input data. Without a solid foundation, campaigns stall, verification rates plummet, and resources burn through proxy bandwidth with little to show. This is precisely why GSA SER verified lists have become a non-negotiable asset for serious practitioners.

A target list that hasn't been verified is functionally useless. It contains dead domains, sites that have hardened their security against automated submissions, platforms that no longer accept the specific engine type, and URLs that simply time out. Sending thousands of threads against such a list generates nothing but log errors. Curated and freshly confirmed lists transform the software from a noisy experiment into a precision instrument.

What Defines a Truly Verified List

The term gets thrown around loosely in forums and marketplaces, so clarity on the verification criteria matters. A raw scraped list is not a verified list, and a list tested once six months ago doesn’t qualify either. Real GSA SER verified lists go through a multi-stage refinement process that answers specific technical questions for each entry.

Platform Acceptance Rate

The primary check confirms that the target URL still serves a solvable captcha and that a submission proceeds past the initial form. Many sites appear functional but silently discard automated payloads. A verified list retains only URLs where a test submission actually reached the moderation queue or registered a successful handshake. This weeds out honeypots and completely blocked domains immediately.

Engine and Category Sorting

Verified doesn’t just mean alive. It means the platform correctly maps to the engine selected in GSA SER. A directory that accepts links but gets misclassified as a blog comment engine wastes attempts. Properly prepared lists tag each entry with the exact engine category—article directories, social bookmarks, wiki, Web 2.0, forum profiles—so that the software doesn’t attempt impossible post types. This granular sorting dramatically improves the live-link conversion rate.
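As a rough illustration of the sorting step, the sketch below groups entries by engine tag and sets aside anything whose tag is unrecognized. The `(url, engine)` tuple layout, the `KNOWN_ENGINES` set, and the function name are assumptions made for this example. They are not GSA SER's own file format or API.

```python
from collections import defaultdict

# Hypothetical tag vocabulary, taken from the categories named in the text.
KNOWN_ENGINES = {
    "article directory",
    "social bookmark",
    "wiki",
    "web 2.0",
    "forum profile",
}


def group_by_engine(entries):
    """Split (url, engine) pairs into per-engine buckets.

    Returns (grouped, unknown): grouped maps a normalized engine tag to
    its URLs, and unknown collects URLs whose tag is not recognized so
    they can be reviewed instead of being posted to blindly.
    """
    grouped = defaultdict(list)
    unknown = []
    for url, engine in entries:
        key = engine.strip().lower()
        if key in KNOWN_ENGINES:
            grouped[key].append(url)
        else:
            unknown.append(url)
    return dict(grouped), unknown


if __name__ == "__main__":
    sample = [
        ("https://example.org/wiki/Main", "Wiki"),
        ("https://example.net/submit", "Article Directory"),
        ("https://example.com/post", "mystery engine"),
    ]
    grouped, unknown = group_by_engine(sample)
    print(grouped)
    print(unknown)
```

Keeping the unrecognized entries in a separate bucket, rather than dropping them silently, makes misclassification visible before it costs submission attempts.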

Contextual and Language Alignment

Generic verification often ignores niche relevance. An advanced verified list includes metadata about the site’s language, its general topic, and sometimes its outbound link profile. Throwing unrelated anchors at a Czech-language medical wiki generates no value and increases the chance of negative spam signals. The best GSA SER verified lists let you filter by niche before you even import, saving the later pain of disavowing toxic domains.

Why Freshness Overrides Volume Every Time

A common mistake is hoarding massive archives. A single 500,000-entry list from last year performs worse than a tight 15,000-entry list verified within the last 72 hours. Webmasters change their comment systems, delete subdomains, and update their CMS on a daily cycle. Domain registrations lapse. HTTP headers shift from open to restrictive. The half-life of a target URL is brutally short in the world of automated posting.

Using stale data forces the software to chew through proxies and retries, hitting dead endpoints repeatedly. This slows the whole operation and leaves a footprint that gets IP addresses burned across entire subnets. Purchasing or building routine fresh GSA SER verified lists ensures that each thread spends its time on hosts that actually respond with a 200 OK and a captcha, not a 404 or a silent firewall drop.
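The freshness rule can be expressed as a simple age filter. The following is a minimal sketch, assuming each entry is a dictionary with a `verified_at` timestamp. That field name and the `fresh_entries` helper are hypothetical, and the 72-hour default simply mirrors the figure used above.

```python
from datetime import datetime, timedelta, timezone


def fresh_entries(entries, now, max_age=timedelta(hours=72)):
    """Keep only entries whose last verification is within max_age of now.

    Each entry is assumed to be a dict holding a timezone-aware
    'verified_at' datetime.
    """
    return [e for e in entries if now - e["verified_at"] <= max_age]


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    entries = [
        {"url": "https://example.org/a", "verified_at": now - timedelta(hours=10)},
        {"url": "https://example.org/b", "verified_at": now - timedelta(days=200)},
    ]
    for entry in fresh_entries(entries, now):
        print(entry["url"])
```

Passing `now` in explicitly, rather than reading the clock inside the function, keeps the filter easy to test and to rerun against archived lists.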

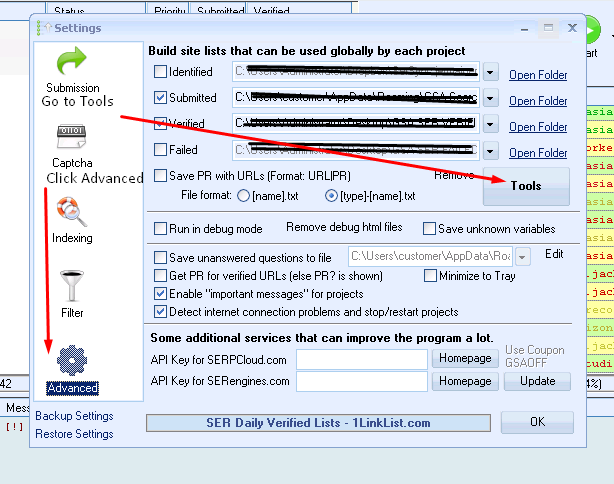

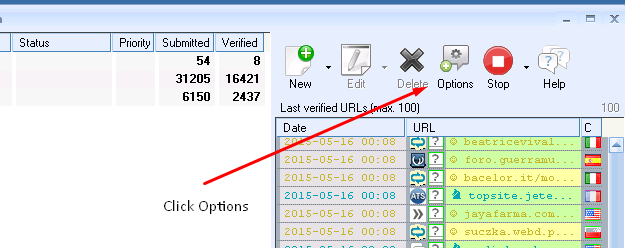

Building Your Own Internal Verification Pipeline

Relying solely on third-party providers creates a single point of failure. The most resilient setups layer external verified data with an internal re-verification routine. After sourcing a base list, you run it through a dedicated GSA SER instance set only to test mode, with conservative thread counts and high-quality private proxies.

The internal process logs every URL that reaches the “Submitted successfully” or “Verification needed” stage. Sites that fail three consecutive daily tests get culled automatically. This feedback loop produces a constantly self-cleaning asset. Over weeks, the list shrinks but the success rate approaches ninety percent or higher. Feeding these internally confirmed entries back into production campaigns creates a flywheel that independent scraped lists can’t match.
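The culling rule is easy to get subtly wrong, because a single pass must reset the count. One way to sketch it, assuming the daily test results have already been reduced to a pass or fail flag per URL: the function names and the `daily_results` mapping are illustrative and are not part of GSA SER.

```python
FAILURE_LIMIT = 3  # from the text: three consecutive failed daily tests


def update_streaks(streaks, daily_results):
    """Return updated consecutive-failure counts after one day's tests.

    streaks maps url -> current run of failures.
    daily_results maps url -> True (passed) or False (failed).
    A pass resets the run to zero; a failure extends it by one.
    """
    updated = dict(streaks)
    for url, passed in daily_results.items():
        updated[url] = 0 if passed else updated.get(url, 0) + 1
    return updated


def cull(streaks, limit=FAILURE_LIMIT):
    """Split URLs into those kept and those removed for reaching the limit."""
    kept = {url: run for url, run in streaks.items() if run < limit}
    removed = sorted(url for url, run in streaks.items() if run >= limit)
    return kept, removed


if __name__ == "__main__":
    days = [
        {"https://example.org/a": True, "https://example.org/b": False},
        {"https://example.org/a": False, "https://example.org/b": False},
        {"https://example.org/a": True, "https://example.org/b": False},
    ]
    streaks = {}
    for results in days:
        streaks = update_streaks(streaks, results)
    kept, removed = cull(streaks)
    print("kept:", sorted(kept))
    print("removed:", removed)
```

Tracking the run of failures, instead of a lifetime failure total, is what keeps a site with one bad day from being dropped.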

Proxy and Captcha Pairing During Verification

Even the perfect URL will fail if the verification test uses abused IPs. A verified list from a provider who tested with residential or mobile proxies holds different value than one tested through datacenter IPs with a poor reputation. When evaluating GSA SER verified lists, always ask what type of infrastructure was used during the confirmation sweep. The answer often explains why one list radically outperforms another despite similar claimed volumes.

Avoiding the Trap of Over-Optimized Footprints

Aggressively verified lists concentrate on platforms known to accept auto-generated accounts. While that sounds ideal, it can leave a recognizable pattern. When every backlink comes from the same network of article directories that remain open, search engines notice the anomaly. The antidote is diversity in the verification sources. Mixing lists prepared on different server environments, with varied user agents, across multiple language packs, disperses the footprint.

Some marketers rotate through four or five independent verified list vendors each month, never letting a single source dominate their campaign. The approach keeps the profile looking organic, even if each individual list underwent deep verification. Combining this with a percentage of unverified, cruftier targets mimics the messy link acquisition pattern of a real business and passes manual review more easily.

Where Verified Data Moves the Needle Fastest

Certain campaign types depend on list quality more than others. Highly competitive short-tail anchor projects burn through targets quickly and can’t tolerate low success rates. In these situations, pulling from a premium pool of GSA SER verified lists, sliced by dofollow/nofollow ratio and outbound link (OBL) count, means you saturate the available neutral targets rapidly without alerting spam filters.

Lower-competition local and tiered link building still benefits, but the tolerance for unverified noise is higher. Even there, starting with a clean, verified foundation cuts project time in half. The least obvious advantage shows up in expired domain crawling. Verified lists often include pages on recently expired domains that hold residual authority, letting you place contextual links before the domains are snapped up by registrars or dropcatchers.

Maintenance Habits That Keep Lists Profitable

Acquiring a sterling list one time is not enough. Web ecosystems decay fast. A maintenance cadence, ideally weekly re-verification sweeps, keeps the asset sharp. List owners who automate this through scheduled tasks and health-check scripts rarely face the campaign fatigue that plagues manual operators. They export the cleaned list, archive the removed entries for forensic analysis, and reload the next batch without any human intervention.

This discipline turns GSA SER verified lists from a static product into a living library. Over months, the library identifies patterns about which types of sites withstand the test of time, feeding that intelligence back into the scraping and prospecting phase. The loop creates a compounding advantage that steadily improves the cost-per-acquisition of every successful backlink.

Ultimately, the software is just an engine. The fuel—the verified lists—determines whether you arrive at page one or idle in the sandbox. Treating list verification as a persistent, granular practice rather than a one-time purchase is what separates high-yield campaigns from endless rounds of troubleshooting.